Welcome to DGSonicFocus and David Griesinger's home page!

Privacy: DGSonicFocus.com and DavidGriesinger.com are both read-only websites. We do not request or gather any kind of data from users of these sites. We do not and will not pass any data which we might have inadvertently obtained to third parties of any type.

Update March 7, 2022

David Griesinger was invited to give a Zoom lecture to the VSA Oberlin First Fridays Online Seminar on March 4, 2022. The group is primarily concerned with the sound and making of violins, but Fan Tao, the organizer of the seminars, thought the group would be interested in the physics behind our ability to hear the subtle differences between instruments.

The talk I presented was entitled: “The physics of auditory proximity and its effects on intelligibility and recall.”

The talk is principally about the importance of the amplitude waveforms of speech and music that enable us to separate multiple talkers or multiple instruments from each other and from noise. These waveforms are key to the cocktail party effect, and they make music more engaging. But reflections – especially early reflections – can randomize the phases of speech and music, and eliminate the ability to separate sonic streams. Music becomes less engaging, and attention in classrooms suffers. There are multiple audible examples in the talk that show these effects.

The slides and examples are here:

DGSonicFocus starts here - Griesinger’s home page begins below!

The author of this site is a physicist/inventor with a passion for sound, music, and acoustics. I have worked as a recording engineer throughout my career, and more often than not I have needed to use headphones to monitor sound quality. But I found that all headphones sound different, none of them produce an accurate frontal image, and their spectral balance never matches the spectrum of a frontal loudspeaker system.

This problem is well known, but why does it occur? Frontal localization in both azimuth and elevation is immediate and accurate with natural hearing, but is poorly perceived with headphones. Small variations in the left-right balance that mimicked head motion did create a frontal image, and head trackers became essential for virtual reality systems. But it is trivial to show that head motion is not needed with natural hearing.

I set out to solve this problem in the 1980s. It is well known that localization in the vertical plane is perceived through variations in the spectrum of the sound that correspond to the spectral differences that result when sounds move about the head. I bought a Neumann KU-81 dummy head to see if I could achieve both an accurate sound image and an accurate timbre by equalizing my headphones to match the dummy. The result was worse.

I finally realized that to reproduce sounds in three dimensions around a listener, a headphone must be equalized to match the spectrum at the eardrum of a particular listener. And the most important direction to get right is from the front.

I made a set of probe microphones to measure the sound spectrum at my eardrums both from a frontal speaker and from various headphones. I then used a 1/3 octave equalizer to match the headphone spectrum to the spectrum from the loudspeaker. The difference was dramatic! The sound image was accurately frontal, and the improvement in timbre was enormous.

With great enthusiasm I asked my friends to listen to the headphones I had so carefully equalized for myself. My equalization did not work for them. A few volunteered to try my probe microphones. Their eardrum spectra were quite different from mine. They too got frontal localization when their headphone spectrum matched the spectrum from a frontal loudspeaker. But very few of my friends wanted to stick probes into their ears.

About 10 years ago I realized that it was possible to test eardrum spectra using equal loudness measurements. Equal loudness measurements have been intensively studied. The key to making them work is an approximately 1 second alternation between a tone at a test frequency and a tone at a reference frequency. Almost everyone is capable of adjusting the perceived level of the test frequency to match the reference, with a reliability of about ±1dB. I made a Windows app that automates this procedure, allowing an interested person to match a personal earphone or earbud to the spectrum of a frontal loudspeaker in about 20 minutes. We have tested this app at lectures and conferences all over the world. It works startlingly well.
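As a rough illustration of the alternation described above, here is a minimal Python sketch that generates a stimulus alternating between a reference tone and a test tone, with the test tone scaled by a listener-adjustable gain in dB. The 500Hz default reference, the one-second segments, the 10 ms fade ramps, and all function and parameter names are assumptions for illustration; this is not code from the DGSonicFocus apps, which use noise bands rather than tones.

```python
import math

def alternating_tones(test_hz, test_gain_db, ref_hz=500.0, segment_s=1.0,
                      cycles=3, fs=44100, ramp_s=0.01, ref_amp=0.25):
    """Return mono samples: reference tone, then test tone, repeated `cycles` times.

    Each segment lasts `segment_s` seconds. The test tone is scaled by
    `test_gain_db` relative to the reference. A short raised-cosine fade at
    both ends of every segment avoids clicks (an assumption, not from the source).
    """
    n_seg = int(round(segment_s * fs))
    n_ramp = int(round(ramp_s * fs))
    test_amp = ref_amp * 10 ** (test_gain_db / 20.0)
    out = []
    for _ in range(cycles):
        for freq, amp in ((ref_hz, ref_amp), (test_hz, test_amp)):
            for i in range(n_seg):
                # distance (in samples) to the nearer edge of the segment
                edge = min(i, n_seg - 1 - i)
                if edge >= n_ramp:
                    env = 1.0
                else:
                    env = 0.5 - 0.5 * math.cos(math.pi * edge / n_ramp)
                out.append(amp * env * math.sin(2 * math.pi * freq * i / fs))
    return out
```

A listener would play the result, nudge `test_gain_db` up or down until the two tones seem equally loud, and record that gain for each test frequency.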

But the perceived spectra and the equalization needed are very different for each individual, particularly at frequencies between 800Hz and 10000Hz. In fact, the current standards for equal loudness measurements specify that at least 100 individuals need to be averaged to get a publishable equal loudness curve.

Current hearing research models the concha and ear canal system as a simple tube. But it is in fact a sophisticated form of ear trumpet that increases the sound pressure at the eardrum by 18dB (for the author) at ~3kHz.
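To put the 18dB figure in perspective: assuming it is a gain in sound pressure level relative to the pressure the same sound would produce without the concha and ear canal (the reference point is our assumption), the corresponding pressure ratio is

```latex
$$20\log_{10}\frac{p_{\text{eardrum}}}{p_{\text{ref}}} = 18\ \text{dB}
\quad\Longrightarrow\quad
\frac{p_{\text{eardrum}}}{p_{\text{ref}}} = 10^{18/20} = 10^{0.9} \approx 7.9$$
```

so near 3kHz the pressure at the eardrum is roughly eight times larger than at that reference point.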

We have measured more than 100 people using this method, and they are all significantly different. In addition, if you put an earphone near the concha, the resonances all change in unpredictable ways. The result – no headphone reproduces an accurate frontal timbre at the eardrum, and an equalization that works perfectly for one individual or for one headphone will not work for another.

DGSonicFocus Apps

I decided to make this procedure available as a free app for computers, cellphones, and studio systems. DGSonicFocus apps use an alternating third-octave noise test to exactly match the spectrum at a user’s eardrums to the spectrum from a frequency-flat frontal loudspeaker. The result is startling: you perceive frontal localization and accurate timbre without head tracking, as if you were hearing a live performance.

The apps first allow a user with a smartphone or a real-time analyzer to equalize a frontal loudspeaker to be frequency flat from 500Hz to 20kHz.

The app then uses the speaker to present 1/3rd octave noise bands that alternate between a band under test and a reference band at 500Hz. The user adjusts the band under test to be as loud as the reference band. When the user is satisfied, they put on a pair of earphones and repeat the equal loudness test. The app then calculates the equalization needed so the spectrum at the eardrum from the headphone exactly matches the spectrum from the speaker. Once you have measured your personal equal loudness from the front, you can equalize another pair of headphones in just a few minutes.
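The calculation in the procedure above can be sketched as follows, under the assumption (ours, not stated in the source) that each equal-loudness result is stored as the dB gain the listener applied to a band so that it matched the 500Hz reference. If the flat loudspeaker required a gain `s` for a band and the unequalized headphone required `h`, a headphone EQ of `h - s` dB in that band makes the headphone require the same gain as the speaker, which is what matching the eardrum spectra means in this test. The band centers follow the base-ten third-octave series of IEC 61260 between 500Hz and 20kHz. The function names are hypothetical, the sketch treats the matches as level-independent, and the real app may smooth or limit its corrections.

```python
import math

def third_octave_centers(f_lo=500.0, f_hi=20000.0):
    """Exact base-ten third-octave centers 1000 * 10**(k/10) within [f_lo, f_hi].

    The lowest band comes out at ~501.2 Hz; nominal labels (500, 630, 800, ...)
    are rounded versions of these values.
    """
    k_lo = math.ceil(10 * math.log10(f_lo / 1000.0))
    k_hi = math.floor(10 * math.log10(f_hi / 1000.0))
    return [1000.0 * 10 ** (k / 10) for k in range(k_lo, k_hi + 1)]

def headphone_eq_db(speaker_adj_db, headphone_adj_db):
    """Per-band headphone EQ in dB, relative to the 500 Hz reference.

    speaker_adj_db[i]:   gain the listener gave band i to match the reference
                         when heard from the flat frontal loudspeaker.
    headphone_adj_db[i]: the same, heard through the unequalized headphone.
    With EQ e[i], the headphone would need headphone_adj_db[i] - e[i];
    setting that equal to speaker_adj_db[i] gives e[i] = h - s.
    """
    if len(speaker_adj_db) != len(headphone_adj_db):
        raise ValueError("band lists must have the same length")
    return [h - s for s, h in zip(speaker_adj_db, headphone_adj_db)]
```

For example, `headphone_eq_db([0, -3, -8], [0, 2, -1])` returns `[0, 5, 7]`: the headphone needs a 5dB boost in the second band and a 7dB boost in the third to match the loudspeaker at the eardrum.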

Rapidly alternating between a test frequency and a fixed reference frequency is critical! Human hearing continuously adjusts the sensitivity of the basilar membrane as a function of frequency, which makes individual bands seem more uniform in loudness than they really are. Rapid alternation between a reference and a test frequency disables the gain compensation and makes accurate loudness judgements possible.

Our apps have an additional feature that uses crosstalk cancellation to play either normal or binaural recordings through loudspeakers without individual equalization. We have demonstrated this system to sound engineers and acousticians all over the world. If you have frontally equalized binaural recordings, the reproduction can be startlingly accurate. I personally use this system with a pair of full-range speakers on either side of my computer monitor. On a good recording – either standard or binaural – the image is wide, spacious, and beautiful.
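For readers unfamiliar with crosstalk cancellation, here is a minimal Python sketch of a generic recursive canceller, the same basic idea as the Recursive Ambiophonic Crosstalk Eliminator (RACE). It is not the DGSonicFocus or Lexicon "panorama" algorithm, whose details are not given here. It assumes an idealized acoustic path in which each speaker reaches the far ear after an extra `delay` samples with attenuation `gain` and no frequency dependence; both values are hypothetical and would in practice be tuned by ear. The `simulate_ears` helper applies that same idealized model so the result can be checked.

```python
def recursive_crosstalk_cancel(left, right, delay, gain):
    """Feed each speaker the input minus a delayed, attenuated copy of the
    OTHER speaker's output, so that speaker's crosstalk is cancelled at the ear.

    delay: extra path to the far ear in samples (>= 1), hypothetical value.
    gain:  attenuation of that path (0 <= gain < 1), hypothetical value.
    Real systems also shape the crosstalk path with filters; omitted here.
    """
    if delay < 1 or not 0.0 <= gain < 1.0:
        raise ValueError("need delay >= 1 sample and 0 <= gain < 1")
    n = len(left)
    out_l = [0.0] * n
    out_r = [0.0] * n
    for i in range(n):
        # delayed right output: its crosstalk must be cancelled at the left ear
        fb_l = out_r[i - delay] if i >= delay else 0.0
        # delayed left output: its crosstalk must be cancelled at the right ear
        fb_r = out_l[i - delay] if i >= delay else 0.0
        out_l[i] = left[i] - gain * fb_l
        out_r[i] = right[i] - gain * fb_r
    return out_l, out_r

def simulate_ears(out_l, out_r, delay, gain):
    """Idealized listening model: each ear hears its own speaker directly plus
    the opposite speaker delayed by `delay` samples and scaled by `gain`."""
    n = len(out_l)
    ear_l = [out_l[i] + (gain * out_r[i - delay] if i >= delay else 0.0) for i in range(n)]
    ear_r = [out_r[i] + (gain * out_l[i - delay] if i >= delay else 0.0) for i in range(n)]
    return ear_l, ear_r
```

Feeding an impulse on the left channel only through both functions with the same `delay` and `gain` gives ear signals equal to the inputs (within rounding): the left ear hears the impulse and the right ear hears nothing. The recursion is needed because each cancelling signal itself leaks to the opposite ear.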

Alas, when the pandemic came, programming time became hard to find. There are licensing issues with Avid and Apple, and we recently found that the latest updates to the Android operating system may prevent our app from writing to memory. I do not personally have the skills to solve these issues. (I would welcome help from someone skilled with JUCE.)

Our current cellphone apps work fine on older phones, and so do the apps for Windows and Mac computers and tablets. Our VST3 app works with recent products such as the Blue Cat Audio VST jukebox, but we have been unable to get it to load into Sound Forge 12 or Vegas Pro.

We are working with Avid to get a license to make our app available for Pro Tools. A friend uses the prototype AAX app every day and does not like to mix without it. Finding the right people at Avid has been more difficult than I expected. I would welcome help on this issue.

We have decided to release the Windows, Mac, and VST3 versions of our apps. They can be found using the following Dropbox link. If you do not see the one you need, keep checking this site.

https://www.dropbox.com/sh/l7oxszh0dwvwjr6/AADsbLTomcogGzl6oALOcDOUa?dl=0

The current apps include a newly written “About” file with detailed instructions on how to use the program. There is also a YouTube video on my YouTube channel that leads you through the process step by step. The process is not difficult. Once you use the app, the process becomes completely intuitive.

A word of caution: beware of driving headphones – particularly earbuds – from the headphone jack on a laptop or desktop machine. These outputs may not be designed to work with a low-impedance earphone. This can create errors in sonic width and a reduction of the bass. A low-impedance headphone amp is recommended.
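Two textbook circuit effects show how a low-impedance load can cause both symptoms; which of them applies to a particular computer output is an assumption, since the source does not say. An output coupling capacitor in series with the earphone forms a high-pass filter whose corner frequency rises as the load impedance falls, cutting bass. And if both earpieces return current through a shared ground resistance, part of one channel's signal appears across the other earpiece, narrowing the image; this leak also grows as the earphone impedance falls. The component values used below (16 Ω earbud, 220 µF capacitor, 0.5 Ω shared ground) are hypothetical.

```python
import math

def coupling_cap_corner_hz(load_ohms, cap_farads, source_ohms=0.0):
    """-3 dB corner of the high-pass filter formed by a series output
    capacitor and a resistive load: f_c = 1 / (2 * pi * R * C)."""
    return 1.0 / (2.0 * math.pi * (load_ohms + source_ohms) * cap_farads)

def ground_crosstalk_db(load_ohms, shared_ground_ohms):
    """Level across the undriven earpiece relative to the driven one when both
    earpieces return through a shared ground resistance. Assumes ideal
    zero-impedance amplifier outputs and purely resistive earpieces:
    crosstalk = 20 * log10(Rg / (RL + Rg))."""
    return 20.0 * math.log10(shared_ground_ohms / (load_ohms + shared_ground_ohms))
```

With these values the bass corner is about 45Hz and the crosstalk about -30dB for the 16 Ω earbud, versus roughly 2.4Hz and -56dB for a 300 Ω headphone on the same output.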

The original ASIO version for Windows with the older user interface is still in a Dropbox folder, along with an earlier VST3. The earlier VST3 appears to work on many if not most platforms. The older apps and the older VST3 include both headphone EQ and crosstalk cancellation. The input and the output of the older app are analog. You need an ASIO interface with an analog input and output that can drive low-impedance headphones or loudspeakers.

There can be a problem with ground loops when using an analog interface connected digitally to a computer. I use either an isolation transformer for the audio input, or a 4-channel interface. With a 4-channel interface I send audio digitally to the interface, then loop the sound with analog cables from that output to an analog input, and use that input for our app. Our app allows you to assign inputs and outputs to any devices on your computer.

Here is a link to a Dropbox folder with the older apps, some instructions, and two binaural recordings I made in Cologne. The apps and the VST3 use the older user interface. Be sure to read the instructions. The older VST3 appears to work with older sound programs.

https://www.dropbox.com/sh/uvgel6xkbaq61k6/AAB1guqPFm_QGnsyU835t3iea?dl=0

There is also a YouTube video about the older app on my YouTube channel. Please be sure to read the instructions in the Dropbox folder. If you have any questions, please send an email to dhgriesinger@gmail.com

About our headphone app

I have received several emails asking why it is essential to alternate a frequency under test with a reference at 500Hz. Why not just listen to frequency bands one at a time and make them all equally loud? The answer is that human hearing will not let you do this. Human hearing adjusts the sensitivity of the basilar membrane as a function of frequency to make each band equally loud. With single tones or noise bands you perceive the loudness after the adjustment has been made. I tried many times to equalize headphones this way. It was always unsuccessful.

But you can fool the ear by alternating the test band once a second with a reference. With the rapid alternation our hearing does not readjust the gain for each band, and a reliable equal loudness can be obtained. I chose 500Hz for a reference because at that frequency and below there is very little resonance in the ear canal.

When headphones are equalized using our method, the result is accurate timbre and frontal localization without head tracking. The app also requests that when finding the equal loudness for the headphones you adjust the left-right balance to make the noise bands equally loud in both ears. This provides some compensation for minor degrees of hearing loss. One visitor who had hearing aids asked if the app would still work. I said to take out the aids and try the app without them. He was ecstatic: “I can mix again with this!”

The difference in image and timbre with our equalization is startling. It must be heard!

In October 2019 we demonstrated our AAX plug-in for equalizing headphones at the AES convention in New York City.

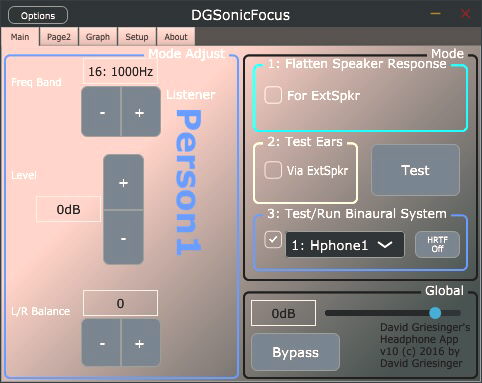

Here is the dashboard for our new apps.

Similar apps for VST3, Windows, Mac, iOS and Android are working. We are looking for ways of making them available.

The next picture shows a possible setup for the crosstalk system. The two subwoofers are not really necessary, but they sound awesome!

David Griesinger's home page

Last Update March 2, 2021

DG at Bash Bish Falls 7/22/11

photo: Masumi per Rostad

(note the message at the bottom of the picture)

Update February 5, 2021

On June 15, 2020 I gave a web seminar titled "The physics of auditory proximity and its effects on intelligibility and recall" through Fedele de Marco's blog in Italy. I think it may be the best presentation I have made on the subject of Proximity. The slides for the talk are here:

We have found that the perception of proximity is binary – a sound is either perceived as proximate or it is not. An increase in distance from a sound source of only one meter can make the difference between a proximate perception, and a muddy perception. The PowerPoints above have examples that can show this effect.

A talk I gave at the IOA conference in 2018 goes into more detail about Proximity, and includes many audio examples you might find interesting. Here is a link to the PowerPoint.

Localization, Loudness and Proximity 8.pptx

A user pointed out an error in this site concerning the code for calculating LOC, which is a measure we developed that predicts from a binaural impulse response whether a particular seat in a hall will have proximate sound – or not. We keep working to make this prediction as reliable as possible.

Experiments at the Rensselaer University EMPAC hall and subsequent lab experiments showed that reflections that arrive within 5ms of the direct sound increase the likelihood of hearing proximity, and increase the limit of localization distance, or LLD. But reflections that arrive after 7ms decrease proximity and the LLD. I added a cross-fade window centered at 6ms in the LOC code to account for these perceptions. The new code matches experiments better. The new code is here:
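The LOC formula and the exact shape of the cross-fade are not given here, so the following Python sketch only illustrates the idea of such a window: a reflection arriving before 5ms is counted entirely as beneficial, one arriving after 7ms entirely as detrimental, and one in between is split by a fade centered at 6ms. The raised-cosine shape, the energy split, and all names are assumptions for illustration, not the published LOC code.

```python
import math

def crossfade_weights(t_ms, start_ms=5.0, end_ms=7.0):
    """Split a reflection arriving t_ms after the direct sound into
    (w_early, w_late) weights that sum to 1. Before start_ms it counts as
    early (helpful), after end_ms as late (harmful); in between a
    raised-cosine fade centered at (start_ms + end_ms) / 2 is used."""
    if t_ms <= start_ms:
        return 1.0, 0.0
    if t_ms >= end_ms:
        return 0.0, 1.0
    x = (t_ms - start_ms) / (end_ms - start_ms)
    w_early = 0.5 + 0.5 * math.cos(math.pi * x)
    return w_early, 1.0 - w_early

def split_reflection_energy(ir, fs, direct_index):
    """Accumulate squared impulse-response samples after the direct sound
    into early and late energy, weighted by crossfade_weights."""
    early = late = 0.0
    for n in range(direct_index + 1, len(ir)):
        t_ms = 1000.0 * (n - direct_index) / fs
        w_early, w_late = crossfade_weights(t_ms)
        energy = ir[n] * ir[n]
        early += w_early * energy
        late += w_late * energy
    return early, late
```

A reflection at 6ms contributes half its energy to each side. In a full measure these two energies would feed whatever formula compares the direct sound with the reflections; that formula is not reproduced here.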

https://www.dropbox.com/sh/p9ufove0e5moy6s/AACL1sA853AYfzs6CgJ8MLAYa?dl=0

A bug in the earlier C language code has been fixed. The new .exe from the C code will run on any Windows computer. It gives the same values for LOC as the Matlab version. Both the Matlab and C versions include a cross-fade window for reflections that arrive between 5ms and 7ms. These either enhance localization or reduce it, depending on when they arrive. The C .exe now draws a simple graph that shows the build-up of reflections relative to the direct sound, similar to the graphs the Matlab code draws.

Here is the Dropbox link to a folder that contains both the latest Matlab version and a C language program that does simple graphics, along with a test impulse file and instructions.

https://www.dropbox.com/sh/p9ufove0e5moy6s/AACL1sA853AYfzs6CgJ8MLAYa?dl=0

Both the Matlab code and the C executable ask for a 16-bit, 44.1kHz binaural stereo impulse response as a .wav file. The direct sound of the impulse should start within about ten milliseconds of the start of the file. LOC values for the left and right ears are returned.

To use the executable, open a command window, type the file name of the executable, and hit return. You will be prompted for the file name of the impulse response. Hit return and the LOC values will display. A truncated copy of the impulse response is written as a .wav file, so you can check that the program found the impulse correctly.
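Before running the LOC program it may help to check that an impulse response meets the stated requirements. This Python sketch, using only the standard `wave` and `struct` modules, rejects files that are not 16-bit, 44.1kHz stereo and estimates where the direct sound starts as the first sample reaching 20% of each channel's peak. That threshold and the function name are assumptions; the LOC program's own onset detection may differ.

```python
import struct
import wave

def check_binaural_ir(path, max_onset_ms=10.0, threshold=0.2):
    """Validate a binaural impulse-response .wav and locate the direct sound.

    Returns (onsets, onset_ms, ok): the first sample index in each channel
    whose magnitude reaches `threshold` times that channel's peak, the
    earlier of the two onsets in milliseconds, and whether it falls within
    `max_onset_ms`. The threshold rule is a hypothetical heuristic.
    """
    if not 0.0 < threshold <= 1.0:
        raise ValueError("threshold must be in (0, 1]")
    with wave.open(path, "rb") as w:
        fmt = (w.getnchannels(), w.getsampwidth(), w.getframerate())
        if fmt != (2, 2, 44100):
            raise ValueError("expected 16-bit, 44.1 kHz stereo, got %r" % (fmt,))
        n = w.getnframes()
        raw = w.readframes(n)
    # 16-bit PCM in a .wav file is little-endian, interleaved L, R, L, R, ...
    samples = struct.unpack("<%dh" % (2 * n), raw)
    onsets = []
    for channel in (samples[0::2], samples[1::2]):
        peak = max(abs(s) for s in channel)
        if peak == 0:
            raise ValueError("silent channel")
        limit = threshold * peak
        onsets.append(next(i for i, s in enumerate(channel) if abs(s) >= limit))
    onset_ms = 1000.0 * min(onsets) / 44100
    return onsets, onset_ms, onset_ms <= max_onset_ms
```

If `ok` comes back false, trimming leading silence from the file before running the LOC program would be the natural next step.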

12/15/19

In other news…

Matthew Neil and Michelle Vigeant presented a paper at the ICSA conference in Amsterdam describing the results of their tests of listener preference for the sound of a large number of concert halls. The experiments were carefully done, using third order Ambisonics to reproduce the sound from a large electronic orchestra. Separate impulse responses from each loudspeaker were recorded at various listening positions using a 32-channel Eigenmike. The recorded data was reproduced in an anechoic chamber using independently recorded anechoic music for each instrument.

The result of the preference tests was surprising. At the presentation Neil claimed that seventy-five percent or more of the preference could be explained with a single perception – Proximity. The conclusion is not unexpected from my work – but I was startled by the magnitude of the difference between proximity and all the standard descriptors of hall sound. Neil exclaimed “but there is no measure for proximity!” I pointed out that there is such a measure, LOC, that uses a binaural impulse response as an input. The measure has been published in Leo Beranek’s last three JASA papers, and he gives our original formula for calculating it. (The newer code is better…)

The result was startling to me, not for the fact that people like proximity, but for the degree to which they seemed to prefer it. It is hard to get 75% or more of people to agree with anything! But in the preprint for the talk Neil et al. state that their test subjects were all classical musicians, and not simply picked off the street. The test material was classical, and the subjects knew how to listen to it. I think the selection of the subjects was appropriate. Classical music when well played and well heard is complex, emotive, and fascinating.

I have devoted much of my life to learning how to make and play back classical recordings that hold attention in this way, and learning how to design halls which can provide an even better experience for the majority of the audience. The papers on this site are intended to help other people understand some of the things I have learned. They are primarily preprints or power points, and are not published in standard journals. Hopefully this will change.

The power point in the next section might be helpful…

Listening to Concert Halls

4/9/20 - A viewer asked about a talk from the ICA conference in 2013 called "What is Clarity and how can it be measured". As of 6/13/20 I have updated the PowerPoint for that talk to include all the audio examples: "What is Clarity and how can it be measured". Some of the audio files from that talk are also in a paper from Toronto in 2013, "Measuring loudness, clarity, and engagement in large and small spaces". These sound examples also work.

A researcher made a request through LinkedIn for a copy of a paper called “Listening to Concert Halls”. I had no idea when or where I had given such a talk. A search through this site and my current computers did not find this title. But an XP laptop from 2008 is still working. Searching through it brought up a presentation from a lecture I shared with Leo Beranek at the Acoustical Society special session in 2004 in honor of Leo’s 90th birthday and the 75th anniversary of the ASA. Leo asked me to share his talk. This was a great honor, and I put a lot of work into this presentation.

The .ppt of my talk had been copied from an earlier computer with a different directory structure, and the audio and video examples were not working. With considerable effort I found the missing files in other directories, and managed to embed them into a .pptx that runs on modern computers.

The resulting power point is long but very interesting. It describes in detail my thinking about the importance of what we are now calling “Proximity,” and how it depends on the periodicity of the amplitude envelope of waveforms above 1000Hz. It also includes my first attempts to make an autocorrelator that graphically detects the physical differences between proximate sounds and sounds that are perceived as distant. The presentation includes many sonic examples that make the concepts clear.
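The idea of detecting envelope periodicity with an autocorrelator can be sketched in a few lines of Python. This is a generic illustration, not the ear model from the presentation. It assumes the input has already been band-limited to a region above 1000Hz (for example, one band of a filterbank), extracts the amplitude envelope by full-wave rectification and a one-pole low-pass at a hypothetical 400Hz cutoff, and searches lags of 2.5 to 12.5 ms (pitches of 80 to 400Hz) for the strongest normalized autocorrelation. It also reports the envelope's modulation depth, because a nearly steady envelope can still show high correlation from residual carrier ripple.

```python
import math

def envelope(x, fs, cutoff_hz=400.0):
    """Amplitude envelope: full-wave rectification followed by a one-pole
    low-pass filter. The 400 Hz cutoff is a hypothetical choice that keeps
    pitch-rate fluctuations while attenuating carrier ripple."""
    a = math.exp(-2.0 * math.pi * cutoff_hz / fs)
    env, y = [], 0.0
    for s in x:
        y = (1.0 - a) * abs(s) + a * y
        env.append(y)
    return env

def envelope_periodicity(x, fs, min_lag_ms=2.5, max_lag_ms=12.5):
    """Return (best_lag_samples, correlation, depth) for the envelope of x.

    correlation: largest normalized autocorrelation of the mean-removed
                 envelope over the lag range (near 1 = strongly periodic).
    depth:       envelope standard deviation divided by its mean.
    """
    env = envelope(x, fs)
    mean = sum(env) / len(env)
    e = [v - mean for v in env]
    energy = sum(v * v for v in e)
    if mean <= 0.0 or energy == 0.0:
        return 0, 0.0, 0.0
    best_lag, best_r = 0, -1.0
    for lag in range(int(fs * min_lag_ms / 1000), int(fs * max_lag_ms / 1000) + 1):
        r = sum(e[i] * e[i + lag] for i in range(len(e) - lag)) / energy
        if r > best_r:
            best_lag, best_r = lag, r
    depth = math.sqrt(energy / len(e)) / mean
    return best_lag, best_r, depth
```

For a 2kHz carrier amplitude-modulated at 125Hz, the best lag lands near 8 ms with high correlation and large depth; a steady 2kHz tone gives a small depth, which is the signal that any correlation found there is only carrier ripple.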

A major goal of the talk was to show both visually and aurally why too many early reflections from the stage houses or front surfaces of halls and rooms are detrimental to sound quality. The presentation precedes my work on the measure LOC, but was for me an essential step toward finding a practical way of predicting where proximity will fail in a hall. Reading and listening to the audio examples in this PowerPoint is very helpful for understanding both proximity and its life and death in current practice.

The presentation is here:

www.davidgriesinger.com/ICA_2004 imbedded.pptx

Headphone presentation at the AES New York Convention, October 16, 2019

My presentation focused on the enormous variation in the spectra at the eardrum between individual listeners, and why duplicating their individual spectra at the eardrum is so important for headphone listening. The slides are here:

Individual Equalization at the eardrum

8/29/19 More on headphone equalization

We will be demonstrating our application for equalizing headphones to match the hearing of individual listeners at the upcoming AES convention in New York City in October. We have created an AAX plugin for Pro Tools users that allows individuals to find the precise equalization for their headphones that will match the sound pressure that they hear to the sound pressure of a frequency-flat frontal loudspeaker.

We have been demonstrating this procedure for many people using a Windows program. There has been considerable enthusiasm. The procedure is described in a YouTube video on my YouTube channel. If you want to try it, send me an email and I will provide a link to the software, some instructions, and a test binaural file.

I recently had a request from a person who for some reason was not able to get the Windows app to work in his system. I mentioned that it might be possible for him to do the procedure manually by using a set of noise bands that I made available as .wav files. I have decided to make these files available to others for download. I do not have time at the moment to make a set of instructions - but there is some documentation available in the folder with the files. If you would like to try this, here is a link. https://www.dropbox.com/sh/v3io71edub1mgw8/AADtJCyq54TqTaDd0lGKP0kPa?dl=0

Heyser Lecture

4/4/19 I was invited by the Audio Engineering Society to give the honorary Heyser Lecture for the March convention in Dublin, Ireland. I was asked to talk more about my life than my work, but I decided to combine life and work in a way that was both entertaining and informative.

The lecture, "Learning to Listen", went very well, although my computer crashed during the long introductions and I had to ad-lib for a few minutes while it rebooted. The official videographer was a no-show, but at the last minute a small video camera was found. I put together a YouTube video of the lecture with the slides.

Here it is: https://www.youtube.com/watch?v=Ms56GDmjEko. The image of me is fuzzy, but the slides are high-def and clear.

The power points with the sound files are here:

Subjective Aspects of Room Acoustics

from the RADIS 2004 conference in Japan

3/4/19 While working on the slide show for the Heyser Lecture in Dublin I found the original files for the talk I gave at the RADIS 2004 conference in Japan, "Subjective Aspects of Room Acoustics". This paper presents the first example of the physical mechanisms behind the auditory perception of "closeness", which we now call "proximity." The paper includes an ear model that contains a simple autocorrelator, or comb filter, that correctly distinguishes between sounds that are perceived as "close" and those that are perceived as "far". In the previous version of this paper on this website the sound and video files did not play when clicked. I upgraded the .ppt to a .pptx where the sounds are now embedded. They will play when they are clicked.

12/12/18

There was a request from a reader for the sound files in a talk called "Pitch, Timbre, Source Separation and the Myths of Loudspeaker Imaging." I found an interesting preprint for a similar talk given at the AES conference in Budapest in 2012. It contains links to the same sound files, which will play when clicked. I have uploaded it to this site. A link to it is here:

IOA 2017

I attended an exciting conference on concert hall acoustics organized by the British Institute of Acoustics in the new Elbphilharmonie building in Hamburg. We were able to hear a rehearsal of Ives' Fourth Symphony, and I was lucky enough to be able to hear (and binaurally record) the concert the next day. The concert included the Beethoven Violin Concerto. My presentation was "Localization, Loudness, and Proximity".

Stereo Bass in NYC

Shortly thereafter I presented a paper on stereo bass at the AES conference in New York City. The presentation was well attended by many old colleagues and friends. I spoke rather fast, so there was a lot of time left over for a very interesting discussion. I did not video the presentation, but I made a YouTube video of the talk using just the slides and the audio recording. You can find it on my YouTube channel, or click here:

https://www.youtube.com/watch?v=xuoDo_QKeLI

I made a Dropbox folder which has the audio examples, and the .wav files for a test CD that has test tones in 1/3 octave frequency bands. These are very useful for testing a room for stereo bass. https://www.dropbox.com/sh/0xxrfcseeexrizn/AAB9nwIFNJgtYIwQ490g5txta?dl=0

The next day I was back in Boston for the Binaural Bash - a conference on binaural hearing organized by Steve Colburn at Boston University which is full of the latest work on the neurology of hearing. This is not really my field, but is of high interest. I decided not to make a presentation this year.

Tonmeister and the Cologne Philharmonie

Then it was off to Cologne, Germany for the Tonmeister conference, where I presented a talk similar to the one for the AES above. I also had the chance to demonstrate my crosstalk cancellation system to many of the friends and attendees at the conference. I had a quiet room to do it in, and the results were very encouraging. I got two tickets to the Cologne Philharmonie. Gunter Engel joined me, and we swapped seats at the half. I deliberately chose seats more distant than my seat at my previous concert there. The sound was good in the closer one, and the more distant one was noticeably not as good.

After the Tonmeister conference I decided that adjusting the crosstalk delay in samples at 44.1kHz was a bit too coarse. I added a few lines of C to run the delay lines at 88.2kHz while leaving the rest of the program at 44.1kHz. It worked.
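The gain in resolution is easy to quantify. One sample lasts 1/44100 s ≈ 22.7 µs at 44.1kHz but only ≈ 11.3 µs at 88.2kHz, so the worst-case rounding error of a delay halves from about 11.3 µs to about 5.7 µs. This Python sketch, whose function names are hypothetical, rounds a target delay in microseconds to whole samples at each rate and reports the error; the 100 µs target is an arbitrary example, not a value from the crosstalk program.

```python
def delay_in_samples(delay_us, fs):
    """Nearest whole-sample delay for a target delay in microseconds."""
    return round(delay_us * 1e-6 * fs)

def rounding_error_us(delay_us, fs):
    """Achieved delay minus target delay, in microseconds."""
    return delay_in_samples(delay_us, fs) / fs * 1e6 - delay_us

for fs in (44100, 88200):
    n = delay_in_samples(100.0, fs)
    print(f"{fs} Hz: {n} samples, error {rounding_error_us(100.0, fs):+.2f} us")
# 44100 Hz: 4 samples, error -9.30 us
# 88200 Hz: 9 samples, error +2.04 us
```

Running only the delay lines at the higher rate, as described above, buys this finer step without doubling the cost of the rest of the processing.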

I will prepare a Dropbox folder with the crosstalk application in the near future.

11/12/17 At the ISEAT2017 conference in Shenzhen China November 4th and 5th of 2017 I presented a new version of the talk on concert halls and headphones, once again called "Laboratory reproduction of binaural concert hall measurements with individual headphone equalization at the eardrum." The slides contain translations in Chinese.

New in the slides is a summary of the significant errors in previous work on headphones, hearing, and room acoustics. I believe that these errors have led to serious mistakes in these fields. There is also a brief look at my current work on reproducing binaural recordings with crosstalk cancellation.

The crosstalk system is a refinement of a program we called "panorama" in the early CP1, 2, and 3 series of Lexicon processors. It works much better than before, and much better than I had any right to expect. A listener who has performed the headphone equalization process can put the headphones on and off while sitting in front of the speakers. The sound image and timbre are almost identical. The advantages of the crosstalk system are that it requires no individual equalization, and small rotations of the listener's head keep the front image stable, just as with normal hearing. If you are interested in trying this system, contact me by email.

The presentation has many audio examples at the end, so it takes considerable time to download or view. Don't give up as it is loading.

Boston Acoustical Society 2017

7/7/17 I gave two PowerPoint presentations at the Acoustical Society meeting in Boston, and co-chaired a session on concert hall acoustics with Jonas Braasch. It was gratifying that several of the presenters, including Chris Blair and Steve Barbar, echoed my thoughts about the importance of limiting the strength of the earliest reflections in as many seats as possible.

My first talk was similar to the one I gave in Berlin. This is a long file, as it contains binaural sound clips that demonstrate the effects of early reflections in two seats in Boston Symphony Hall, and one seat in a new hall in Germany. The link to the PowerPoint is here:

The second talk was intended to be both amusing and informative. It first discusses why successful performance venues in the past achieved high audience engagement, and then gives examples of a few early installations of our enhancement system by other acousticians that did not adequately consider the clarity of the direct sound before attempting to enhance the reverberance.

Steve Barbar and I are proud of this system and the skill with which we install and adjust it. Older installations using Lexicon hardware are still running in many venues with total satisfaction. We keep improving the algorithms, which automatically equalize themselves and run in cost-effective hardware. We believe that when our system is carefully installed and adjusted, it is totally believable as a natural part of a space. In some spaces it can also enhance the proximity and clarity by slightly augmenting the direct sound. But we insist on ensuring that the conventional acoustics of the space are appropriate before attempting to improve them.

5/17/17 Verizon.net is now part of AOL, but they have decided to continue hosting the email domain. Thus the old email addresses still work. But I mostly use GMAIL because of its superior spam filter. You can contact me at any of the old addresses as well as by GMAIL.

5/17/17 The only new addition today is the PowerPoint for the upcoming AES convention in Berlin. I am uploading it here just in case the TSA confiscates my laptop.

1/20/17 Much has happened since the last update. With the help of Nikhil Deshpande, a graduate student at Rensselaer University, I revived my work on headphone equalization. The software app was re-written to be both easier to use and more flexible. I gave papers on the subject, and wrote preprints for various conferences.

Headphones and the ICA conference in Buenos Aires

The ICA conference in Buenos Aires was particularly successful, as I was able to demonstrate the app and the theory behind it to many colleagues, including Jens Blauert and Dorte Hammershøi. They join what is by now a long list of people who have successfully achieved frontal localization without head tracking. They also greatly enjoyed hearing my binaural recordings of performances in halls, both good and not so good.

The Tonmeister Tagung in Cologne was also exciting. Many people came up to me after my talk looking for a chance to try the app. I managed to do it with a few of them, including Georg Wuttke and Gunther Theile. But many more wanted a chance. I collected email addresses and sent them a link to a Dropbox folder with the app, the instructions, and a few binaural recordings. If anyone reading this would like to try the app, please email me at the address in the picture. I am not adding a link to the app on this site because I would like to know who is trying the app, and hopefully cajole them into sending me their data. I am quite interested in the relationship between what they measure as their equal loudness curves at moderate level to a frontal loudspeaker, and the headphone equalization they eventually find for a particular phone type.

I made a Youtube video demonstrating how to use the app. It is on my Youtube channel, and is listed in the VIDEOS tab at the top of this page.

To repeat: if you want to try the app, send me an email.

Here are two links to recent presentations at the AES convention in Los Angeles, November 2016:

After giving this talk I went over the code I used to make the slides on the timbres that different individuals hear with the same pair of headphones. I found that the timbres that I put on the slides were inverted. I had presented the correction to the headphones, and not the perceived timbre. This error has been corrected in these slides. The timbres heard in the new slides are less obnoxious than the inverse timbres, but still not very good. I put the inverse timbres on the slides in addition, so you can hear the difference.

I also added each individual's equal loudness curve to the slides, so you can see the difference between their equal loudness and the correction needed for the headphones. The two curves are very often a close inverse of each other.

It is clear that the headphone is effectively damping their ear canal resonances. When these resonances are replaced by the headphone EQ they perceive natural timbre and frontal localization.

The next link is the companion power point on proximity given at the Los Angeles conference in November.

The talk on headphones I gave at the Tonmeister in Cologne (which you can watch on Youtube) included the slides from the LA presentation about Boston Symphony Hall seat DD 11. After the talk in Cologne I began to wonder why there was such a large improvement of the sound in that seat when I deleted the first lateral reflection from the right side wall. I went back to the original data and re-did the whole procedure looking for errors. There was nothing really obvious. After very carefully considering the effects of the directivity of the loudspeaker, and the corresponding frequency response to be expected from the direct sound and the reflections, the auditory result was slightly less obvious than the original slide, but still startling. The new audio files are in this preprint.

I have added a similar slide for the balcony seat row 2, seat 23. Again the reproduction is very close to my live recordings. The hall sound is different, and very fine. I have also added links to the slides so you can download the music files and manipulate them for yourself using an audio editor such as Audition. You can hear the effects of slight changes in the direct to reverberant ratio, or changes in the delay of the reverberation or reflections.
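For readers who prefer a script to an audio editor, the two manipulations mentioned above can be sketched in Python with numpy. This is a minimal sketch, not the procedure used for the slides: it assumes, hypothetically, that the direct sound and the reverberant part are available as two separate mono arrays at the same sample rate (the downloadable files may not be split this way), and `remix` is an illustrative name.

```python
import numpy as np

def remix(direct, reverb, fs, reverb_gain_db=0.0, reverb_delay_ms=0.0):
    """Change the direct-to-reverberant ratio and the delay of the reverb.

    direct, reverb: 1-D float arrays (hypothetical separate stems).
    reverb_gain_db: gain applied to the reverberant part only; a negative
        value raises the D/R ratio by that many dB.
    reverb_delay_ms: extra delay inserted before the reverberant part.
    """
    gain = 10.0 ** (reverb_gain_db / 20.0)           # dB -> amplitude factor
    shift = int(round(reverb_delay_ms * 1e-3 * fs))  # ms -> whole samples
    delayed = np.concatenate([np.zeros(shift), reverb]) * gain
    out = np.zeros(max(len(direct), len(delayed)))
    out[:len(direct)] += direct                      # direct sound unchanged
    out[:len(delayed)] += delayed                    # scaled, delayed reverb
    return out
```

For example, `remix(direct, reverb, 44100, reverb_gain_db=-2.0, reverb_delay_ms=10.0)` raises the D/R ratio by 2 dB and delays the reverberation by 10 ms.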

6/21/16 I noticed the power points for the talk that I gave on classrooms in Indianapolis in 2014 were not on the site. They include sound files that demonstrate the sonic difference between different seats in a model classroom. The differences in sound quality are worth hearing.

3/15/16 The Institute of Acoustics conference in Paris was a special occasion. The venue was the large lecture room in the new Philharmonie de Paris. After a full day of interesting papers we were supplied with tickets to the organ dedication concert in the new hall. I presented a poster on my headphone equalization system on the first day:

The poster describes the advantages of binaural reproduction of hall sound, and our app for creating individual equalization of headphones. Although the poster venue was too noisy to demonstrate the process, on the last day of the conference two of the participants tried it in my hotel room and were quite excited about the results.

On the next day, after a morning hearing about the complexities of the design process for the new hall, I made my oral presentation.

A video of the presentation is on my YouTube channel: https://www.youtube.com/watch?v=84epTR2fyTY

There are preprints for the poster and the presentation on proximity here:

The headphone equalization software is still a work in progress, and progress is slow. But it might be useful in its current form. Email me if you might be interested in trying it. To work best you must provide a Windows computer with at least a two-channel ASIO audio interface, and a loudspeaker that can be equalized to be frequency flat to pink noise on-axis, or whose frequency response in 1/3 octave bands can be measured. The app provides the pink noise. A subject sits with their head close to the speaker axis and adjusts for their individual equal loudness curve for 1/3 octave band noise by comparing the loudness of each band to a 500Hz reference. Once their equal loudness curve is known, any headphone can be equalized by repeating the procedure with the headphone instead of the speaker. The app then lets you hear pink noise or music through your own individual equalization.
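The arithmetic behind the last two steps can be sketched as below. This is a minimal Python illustration under stated assumptions, not the app's code: it assumes each measurement is stored as one dB value per exact base-2 1/3 octave band (the level the listener set for that band to match the 500Hz reference), and that the headphone correction is simply the band-by-band difference between the headphone and loudspeaker settings. The function names are hypothetical.

```python
import numpy as np

def third_octave_centers(n_low=-15, n_high=12):
    """Exact base-2 1/3 octave centre frequencies around 1 kHz, in Hz."""
    n = np.arange(n_low, n_high + 1)
    return 1000.0 * 2.0 ** (n / 3.0)   # 31.25 Hz ... 16 kHz by default

def headphone_eq(speaker_db, headphone_db, centers, ref_hz=500.0):
    """Per-band EQ (dB) to apply to the headphone (hypothetical model).

    speaker_db[i]:   level the listener chose for band i to match the
                     reference band through the flat loudspeaker
    headphone_db[i]: the same measurement repeated through the headphone
    """
    # Where the headphone needed more level than the speaker, boost it.
    eq = np.asarray(headphone_db, float) - np.asarray(speaker_db, float)
    ref = np.argmin(np.abs(np.asarray(centers) - ref_hz))  # band nearest 500 Hz
    return eq - eq[ref]                # normalize: 0 dB at the reference band
```

If the headphone needed 6 dB more than the loudspeaker in one band, the sketch returns a 6 dB boost for that band and 0 dB at 500Hz, regardless of any overall level offset between the two measurements.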

8/18/15

We are currently working on an app for headphone equalization that uses an equal-loudness method. The app is getting pretty useful. I have been able to use it with several headphones and get them close enough to my own hearing to have excellent results reproducing my binaural recordings. These include my favorite Sennheiser 250-2 noise cancelling headphones, the Sennheiser 600s, AKG 701s, and a pair of insert headphones that came free with a Sony ICD SX1000 micro recorder. After eq they sound similar, but not identical. Binaural recordings heard through the circumaural phones sounded pleasant, but lacked the sense of presence and reality that was achieved with the on-ear phones and the insert phones. Circumaural phones, which are currently preferred for binaural playback, have too many resonances inside the cup and concha to be equalized with a 1/3 octave approach, and too much variability each time you put them on to be equalized mathematically.

Prompted by this work I decided to make a short video which describes how to make probe microphones from a readily available lavaliere microphone from Audio Technica. I uploaded the video to a private YouTube address: http://youtu.be/2yYFND4lbAs

I also put on this site a folder that contains the Matlab scripts I use for making impulse responses from sine sweeps. Using the files in the folder you can make impulse responses without Matlab by using Audition, although you will not be able to invert the responses. However, an inverse IR can be made by using the parametric equalizer in Audition to manually equalize the test impulse response to flat. Tedious, but it works. The folder is in www.davidgriesinger.com/probes/sweep_folder.zip.
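The zip file holds the author's Matlab scripts, which are not reproduced here. As a hedged illustration of the general idea only, the sketch below uses a swapped-in, commonly used technique: an exponential sine sweep and regularized spectral division, in Python with numpy. The sweep range, the `eps` regularization, and the function names are assumptions, not taken from those scripts.

```python
import numpy as np

def log_sweep(f1, f2, duration, fs):
    """Exponential (logarithmic) sine sweep from f1 to f2 Hz."""
    t = np.arange(int(round(duration * fs))) / fs
    k = np.log(f2 / f1)
    # Instantaneous frequency rises exponentially from f1 to f2.
    return np.sin(2 * np.pi * f1 * duration / k * (np.exp(t * k / duration) - 1.0))

def impulse_response(recorded, sweep, eps=1e-8):
    """Recover an impulse response by regularized spectral division."""
    n = len(recorded) + len(sweep)        # zero-pad so nothing wraps around
    R = np.fft.rfft(recorded, n)
    S = np.fft.rfft(sweep, n)
    # eps keeps the division stable where the sweep has almost no energy.
    H = R * np.conj(S) / (np.abs(S) ** 2 + eps * np.max(np.abs(S) ** 2))
    return np.fft.irfft(H, n)
```

Setting `eps` to zero makes the division exact in theory, but with real recordings it amplifies noise wherever the sweep has little energy, such as outside the swept range.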

2/26/15 Some recent updates include the slides from my presentation at the Tonmeister convention in Munich last November. These are my best summary of the theory and practice of "Presence". I made a video of the presentation, but it is not edited yet. The title is "Presence as a Measure of Acoustic Quality". Of lesser importance, the tab at the top of this page labeled "Awards" is now working.

I was just looking for one of my favorite papers on this site, and could not find it. It is the paper I presented in Banff on intermodulation distortion in loudspeakers. But it is really a critique of the entire "high definition audio" fad. It is also quite funny. If you have not yet seen it, click here.

The first half of the presentation is the good part. In the second part I attempt to figure out why certain choral recordings sound a bit fuzzy on my then-existing playback system. Since then I discovered that my midrange drivers did not have ideal distortion properties. With the help of Alan Devantier we replaced the drivers with a REVEL part with much lower distortion - and the choral music was significantly less fuzzy. Yea!

11/11/14

My presentation to a session entitled “Architectural Acoustics, Speech Communication, and Noise” at the ASA convention in Indianapolis is now available as a video. The presentation is entitled “The importance of attention, localization, and source separation to speech cognition and recall.”

There is a link in the "Videos" tab above, and here:

https://www.youtube.com/watch?v=IebmsN9e_K8

My youtube channel is here:

https://www.youtube.com/channel/UCuc4vki_YQFfY7oCfKSm5YA

5/7/14

Two powerpoints from the ASA meeting in Providence RI, given May 5th. The first was about the effect of air currents on hall impulse responses measured with convolution methods, such as MLS or sine sweeps. I always knew that one should use short sweep lengths to keep air current data to a minimum, but I had never calculated the degree of error one should expect.

So I decided to model the effect. The result was scary. Very little air movement could have a large effect on the data. I had just finished the basic data when I learned that Ning Xiang and his students were coming to Boston to measure Boston Symphony Hall. I grabbed my tiny soundfield microphone and a small four-channel recorder and bicycled down to BSH. Ning was enthusiastic and decided to make measurements with his two-second sine sweeps, averaging 1, 2 and 10 repetitions. We expected to see differences. At the same time I measured the same seat with my soundfield and a burst from a few tiny balloons. The result was even more scary, and the results seemed very different. The powerpoint for the talk is here:

The paper should be read with some caution. After delivering it I went back to the data that Ning and I had taken. Very careful analysis of Ning’s data did not reveal the effects that the paper predicted. A possible reason: Boston Symphony Hall has a consistent but not turbulent flow of air, at least at the time we tested it. Ning and I intend to study the effects of air currents more thoroughly in other venues.
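For a sense of scale, here is a rough back-of-envelope sketch, a simplification of our own and not the model in the talk: air moving along a path of length L at speed v changes the arrival time by about Lv/c², and averaging repetitions that arrive at slightly different times cancels high frequencies first. The 343 m/s speed of sound, the 20 m path, and the 0.1 m/s air speed are assumed example values. The sketch only covers a steady drift between repetitions, not changes within a single sweep.

```python
import numpy as np

C = 343.0  # speed of sound in m/s (assumed, roughly 20 degrees C)

def time_shift(path_m, air_speed_ms):
    """Change in arrival time (s) when air moves along the path at air_speed_ms."""
    return path_m / C - path_m / (C + air_speed_ms)

def averaging_loss_db(freq_hz, shifts_s):
    """Level change at freq_hz when responses with these time shifts are averaged."""
    phasors = np.exp(-2j * np.pi * freq_hz * np.asarray(shifts_s))
    return 20 * np.log10(np.abs(phasors.mean()))
```

With a 20 m path and 0.1 m/s of air motion the shift is about 17 microseconds, which costs roughly 1.3 dB at 10kHz when two such responses are averaged, and almost nothing at 1kHz.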

The second paper was intended to be about measuring the sonic property I call “Presence.” In previous papers I have called it any number of things, including “Engagement.” Readers may already be familiar with the concept that when a sound is perceived as close to a listener it demands attention, and that the mechanism for the perception of closeness relies on phase information in the frequency range of the vocal harmonics.

Consequently, the paper is mostly a critique of current acoustic measures, which almost all predict very little of value for classroom, opera, or concert hall design. Their current recommended values, if followed, will make a hall less desirable, not more. The reasons they fail are described, and suggestions are made about how to replace them with measures that might actually be helpful.

3/23/14

The big news is the addition of a bit of organization to the top of the page. You will find a link to my YouTube channel, which has three videos on it. The first chronologically was made at a talk to the local section of the Acoustical Society of America. The talk was intended as an introduction to a documentary about the organ builder C.B. Fisk. The introduction tries to make connections between the perception of organ music and how it relates to the acoustics of the venue.

The sound in both talks comes from a recorder in the audience. The organ talk was recorded from my avatar, a fully anthropomorphic copy of my pinna, ear canals, and eardrums. You will hear exactly what I would have heard if I were in the audience. A technical problem setting up the second talk caused this system to fail, but the reliable Zoom H2 worked just fine. I always record from the rear microphones, which sound much better than the front ones because they are angled at 120 degrees instead of 90 degrees.

The second talk goes into much more detail about the physics of sound perception and its relevance to Halls, Operas, and Classrooms. It is divided into two sections, each about 40 minutes long. The first section covers the theory with several important audio examples. The second section applies the theory to a great many current concert venues, and a classroom. This is followed by a very interesting question and answer period, where I get a chance to better explain the workings of my acoustic measure called LOC.

I will try to get the powerpoints from these talks up here soon. It is nice to be able to download them with the audio examples – although most of these are available in the previous talks below.

There are also links to a section of biographies – only one so far – and a section with links to some of my music and hall reviews. I think the hall reviews are still quite interesting. I hope you enjoy them.

7/19/13

The next two links are the presentations to the joint ASA-ICA meeting in Montreal and the ISRA in Toronto. The Montreal session was on whether or not it is time to re-visit the ISO3382 analysis methods. The presentation suggests that a revision to the measures for clarity is long overdue, and gives three examples of better ways to do it, and why. The sonic examples are important. They should work if clicked. "What is Clarity and how can it be measured" The Toronto presentation is better in some ways, and is here: "Measuring loudness, clarity, and engagement in large and small spaces"

There was interest at these meetings in my binaural recordings of concert halls, and in the methods I used to construct the small probe microphones. I updated the slides in the link below on binaural hearing and headphones to include more data on the construction of the microphones. "Binaural Hearing, Ear Canals, and Headphones" I also re-discovered the presentation I gave in Munich in May of 2009 on frequency adaptation. This presentation goes into the details of how the ear localizes sound in the vertical plane, and why this localization fails when timbre is not reproduced exactly. It explains why most headphone sound is perceived inside the head, and why binaural sound can be perceived correctly without head tracking if the recording and the playback are equalized at the eardrum. "Frequency response adaptation in binaural hearing"

One of the startling aspects of my eardrum binaural recordings is the excellent signal to noise ratio. It seems impossible to get such a good result from a 1/4" microphone at the end of a 6cm tube. But if you look in the above link, you will see that the concha and ear canal combine to create a ~20dB peak in the sound pressure at the eardrum just at the frequencies to which we are most sensitive. So the low noise is no surprise at all. Evolution solved the problem for us. Noise from the microphone is audible at higher frequencies where the tube resistance becomes significant. So I apply a bit of noise reduction above 6kHz.
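A rough check on where that peak falls: treating the ear canal as a tube closed at the eardrum and open at the entrance, its first resonance is at c/(4L). The 2.5 cm canal length and 343 m/s speed of sound are assumed typical values, and the concha adds a resonance of its own that this sketch ignores.

```python
C = 343.0  # speed of sound in m/s (assumed)

def quarter_wave_resonance(length_m):
    """First resonance (Hz) of a tube closed at one end and open at the other."""
    return C / (4.0 * length_m)
```

For a 2.5 cm canal, `quarter_wave_resonance(0.025)` gives about 3.4kHz, inside the region where hearing is most sensitive.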

11/12/12

Here are powerpoint slides from a talk I gave at the Lutheries - Acoustique - Musique in Paris a few weeks ago. They will be the basis for a briefer talk at the ASA meeting in Kansas City October 23rd. The title is the same as the title of the talk below I gave in Germany - but I think the talk is better written, and there are new audio examples that should work if clicked. "Pitch, Timbre, Source Separation"

3/26/12

I received a request for an earlier and a bit more complete version of a powerpoint presentation on dummy heads, headphone reproduction, and binaural hearing. I just read the presentation over, and liked it. On 7/18/13 I made a few corrections, and added more information about how to make small probe microphones. The link is here: "Binaural Hearing, Ear Canals, and Headphones"

11/11/11

There was a request from a reader for the soundfiles in the talk called "Pitch, Timbre, Source Separation and the Myths of Loudspeaker Imaging." I found that the preprint for a similar talk given at the AES conference in Budapest in 2012 contains links to the same soundfiles. So I have uploaded it to this site. A link to it is here:

Yes, it is Fasching - but things in Detmold are still calm. The talk I gave yesterday is here: "Pitch, Timbre, Source Separation, and the Myths of Sound Localization" They are more oriented toward loudspeaker reproduction than the slides below, but some of the concepts are the same. The .mp3 of the talk is here: "The recording of the talk in Detmold"

10/31/11

The preprint for the ASA conference in San Diego is similar to, but I hope better than, the one for the IOA conference in Dublin. "The audibility of direct sound as a key to measuring the clarity of speech and music"

The slides are here: "The audibility of direct sound as a key to measuring the clarity of speech and music"

These slides include the audio examples, which should play after a short delay when clicked. If you want to listen to the lecture while looking at the slides you can click here: "The fifteen minute aural presentation". This can take a minute or two to download and start. You might want to right click on the link and download the file before you click on the link for the slides. Another option is to download "www.davidgriesinger.com/Acoustics_Today/talk.zip" and unzip it into an empty directory. This gives you all the audio files, and you can play with them as you like.

My latest preprint presents some of the reasons that although current methods of measuring room acoustics correlate to some degree with the perceived quality of the space, they do not predict that quality with reliability. The preprint was written for the Institute of Acoustics conference in Dublin in May of 2011. The title is not very descriptive of the content. As usual, I submitted the title and a preliminary abstract long before the preprint was written, and by the time I wrote it I had found a better way of getting the point across. "THE RELATIONSHIP BETWEEN AUDIENCE ENGAGEMENT AND THE ABILITY TO PERCEIVE PITCH, TIMBRE, AZIMUTH AND ENVELOPMENT OF MULTIPLE SOURCES"

Acoustic quality has been difficult to define, and it is very difficult to measure something you can't define. Fundamentally the ear/brain system needs to 1. separate one or more sounds of interest from a complex and noisy sound field, and 2. identify the pitch, direction, distance, and timbre (and thus the meaning) of the information in each of the separated sound streams. Previous research into acoustic quality has mostly ignored the problem of sound stream separation - the fundamental process by which we can consciously or unconsciously select one or more of a potentially large number of people talking at the same time (the cocktail party effect) or multiple musical lines in a concert. In the absence of separation multiple talkers become babble. Music is more forgiving. Harmony and dynamics are preserved, but much of the complexity (and the ability to engage our interest) is lost. Previous acoustic research has focused on how we perceive a single sound source under various acoustic conditions. Previous research has also concentrated primarily on how sound decays in rooms - on how notes and syllables end. But sounds of interest to both humans and animals pack most of the information they contain in the onset of syllables or notes. It is as if we have been studying the tails of animals rather than their heads.

The research presented in the preprint above shows that the ability to separate simultaneous sound sources into separate neural streams is vitally dependent on the pitch of harmonically complex tones. The ear/brain system can separate complex tones one from another because the harmonics which make up these tones interfere with each other on the basilar membrane in such a way that the membrane motion is amplitude modulated at the frequency of the fundamental of the tone (and several of its low harmonics). When there are multiple sources each producing harmonics of different fundamentals, the amplitude modulations combine linearly, and can be separately detected. Reflections and reverberation randomize the phases of the upper harmonics that the ear/brain depends upon to achieve stream separation, and the amplitude modulations become noise. When reflections are too strong and come too early, separation - and the ability to detect the direction, distance and timbre of individual sources - becomes impossible. But if there is sufficient time in the brief interval before reflections and reverberation overwhelm the onset of sounds, the brain can separate one source from another, and detect direction, distance, and meaning.
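A minimal numpy sketch of the modulation described above, under simplifying assumptions of our own: three equal-amplitude harmonics (11 to 13) of a 200Hz fundamental stand in for the harmonics reaching one region of the basilar membrane, and a fixed random phase per harmonic is a crude stand-in for what reflections do (real reflections add delayed copies, so the phases also change over time). The peak-to-RMS ratio of the envelope is used as a simple, hypothetical index of how strongly the band is modulated.

```python
import numpy as np

def envelope(f0, harmonics, phases, fs=16000, dur=0.1):
    """Envelope of a band of equal-amplitude harmonics of f0.

    Computed directly as the magnitude of the analytic signal,
    built from the known components.
    """
    t = np.arange(int(round(fs * dur))) / fs
    z = sum(np.exp(1j * (2 * np.pi * k * f0 * t + p))
            for k, p in zip(harmonics, phases))
    return np.abs(z)

def crest_factor(env):
    """Peak-to-RMS ratio of the envelope: high when the band is sharply modulated."""
    return env.max() / np.sqrt(np.mean(env ** 2))

h = [11, 12, 13]                                   # 2200, 2400, 2600 Hz
aligned = crest_factor(envelope(200.0, h, [0.0, 0.0, 0.0]))
rng = np.random.default_rng(0)
scrambled = crest_factor(envelope(200.0, h, rng.uniform(0, 2 * np.pi, 3)))
```

With aligned phases the envelope peaks sharply once every 5 ms (1/200Hz). With scrambled phases the envelope still repeats every 5 ms, but the same energy is spread more evenly and the peaks are lower.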

To understand acoustic quality we need to know how and to what degree the information is lost when reflections come too soon. The preprint presents a model for the mechanism by which sources are separated, and suggests a relatively simple metric which can predict from a binaural impulse response whether separation will be possible or not. For lack of a better name, I call this metric LOC - named for the ability to perceive the precise direction of an individual sound source in a reverberant field.

6/20/11

There have been several requests that I put the matlab code for calculating the acoustic measure called LOC on this site. The measure is intended to predict the threshold for localizing speech in a diffuse reverberant field, based on the strength of the direct sound relative to the build-up of reflections in a 100ms window. The name for the measure is a default - if anyone can come up with a better one I would appreciate it. The measure in fact predicts whether or not there is sufficient direct sound to allow the ear/brain to perform the cocktail party effect, which is vital for all kinds of perceptions, including classroom acoustics and stage acoustics.
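The author's actual formula is in the Matlab code linked below and is not reproduced here. To illustrate only the general idea of comparing the direct sound with the build-up of reflections in a 100ms window, here is a deliberately simplified and hypothetical Python sketch: it treats the first 5 ms after the onset as direct sound (an assumption), averages the running total of later energy over the window, and reports the ratio in dB. Its values are not LOC values, and the 0 dB threshold discussed on this page does not apply to them.

```python
import numpy as np

def loc_like(ir, fs, direct_ms=5.0, window_ms=100.0, threshold=0.1):
    """Hypothetical direct-vs-build-up ratio in dB (NOT the author's LOC).

    ir: mono impulse response (e.g. the ear nearer the source).
    """
    ir = np.asarray(ir, float)
    # Crude onset detection: first sample above 10% of the peak.
    onset = np.argmax(np.abs(ir) >= threshold * np.max(np.abs(ir)))
    ir = ir[onset:]
    nd = int(round(direct_ms * fs / 1000.0))   # samples counted as direct
    nw = int(round(window_ms * fs / 1000.0))   # length of analysis window
    direct = np.sum(ir[:nd] ** 2)
    # Running total of reflected energy, averaged over the window, so
    # early reflections weigh more than late ones.
    mean_buildup = np.mean(np.cumsum(ir[nd:nw] ** 2))
    if mean_buildup == 0:
        return np.inf                           # no reflections at all
    return 10 * np.log10(direct / mean_buildup)
```

Moving the same reflection earlier lowers the result, because its energy then counts for more of the window.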

The formula seems to work surprisingly well for a variety of acoustic situations, both for large and small halls. But there are limitations that need discussion. First, the measure assumes that the speech (or musical notes) have sufficient space between them that reverberation from a previous syllable or note of similar pitch does not cover the onset of the new note. In practice this means that even if LOC is greater than about 2dB, a sound might not be localizable, or sound "Near", if the reverberation time is too long. A lengthy discussion with Eckhard Kahle made clear that the measure will also fail - in the opposite sense - if there is a specular reflection that is sufficiently stronger than the direct sound. This can happen if the direct sound is blocked, or absorbed by audience in front of a listener. In this case the brain will detect the reflection as the direct sound, and be able to perform the cocktail party effect - but will localize the sound source to the reflector. With eyes open a listener is unlikely to notice the image shift.
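The reverberation time caveat can be put in numbers using the standard definition of reverberation time (a 60 dB decay) and assuming an ideal exponential decay. The 200 ms gap between notes used below is an arbitrary example.

```python
def residual_level_db(gap_s, rt60_s):
    """Level (dB) of the previous note's reverberation gap_s seconds after it
    stops, relative to its steady-state level, for an ideal exponential decay."""
    return -60.0 * gap_s / rt60_s
```

With a 200 ms gap, the earlier note's reverberation has fallen only 6 dB when the reverberation time is 2 seconds, and 12 dB when it is 1 second.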

With these reservations, here is the matlab code. It accepts a windows .wav file, which should be a stereo file of a binaural impulse response with the source on the left side of the head. The LOC code analyzes only the left channel. There is a truncation algorithm that attempts to find the onset of the direct sound. This may fail - so users should check to be sure the answer makes sense. The code also plots an onset diagram, showing the strength of the direct sound and the build-up of reflections. If this looks odd, it probably is. In this version of the code the box plot has a Y axis that starts at zero. The final level of a held note (the total energy in the impulse response) is given the value of 20dB, and both the direct level and the build-up of reflections are scaled to fit this value. The relative rate of nerve firings for both components can then be read off the vertical scale. So - if the direct sound (blue line) is at 12, you know the eventual D/R is -8dB. Rename the .txt file to .m for running in Matlab. "Matlab code for calculating LOC"
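The scale of the onset plot described above amounts to a simple conversion. A minimal sketch, assuming the plotted value is 20 dB plus the energy of a component relative to the total energy in the impulse response; the function names are hypothetical.

```python
import numpy as np

def onset_plot_level(part_energy, total_energy, full_scale=20.0):
    """Map an energy onto the plot scale, where the total energy sits at full_scale."""
    return full_scale + 10.0 * np.log10(part_energy / total_energy)

def ratio_from_plot(level, full_scale=20.0):
    """Inverse: dB of a component relative to the total energy, read off the plot."""
    return level - full_scale
```

A direct-sound reading of 12 therefore corresponds to the -8dB figure in the text.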

My latest work on hearing involves the development of a possible neural network that detects sound from multiple sources through phase information encoded in harmonics in the vocal formant range. These harmonics interfere with each other in frequency selective regions of the basilar membrane, creating what appears to be amplitude modulated signals at a carrier frequency of each critical band. My model decodes these modulations with a simple comb filter - a neural delay line with equally spaced taps, each sequence of taps highly selective of individual musical pitches. When an input modulation - created by the interference of harmonics from a particular sound source - enters the neural delay line, the tap sequence closest in period to the source fundamental is strongly activated, creating an independent neural stream of information from this source, and ignoring all the other sources and noise. This neural stream can then be compared to the identical pitch as seen in other critical bands to determine timbre, and between the two ears to determine azimuth (localization) of this source.
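A toy version of the comb-filter idea, for illustration only and not the author's network: the input is a zero-mean cosine at the modulation rate rather than a basilar membrane model, each comb sums 8 taps spaced one candidate period apart, and the 0.99 tolerance used to choose among equally strong candidates is an arbitrary choice. Because a delay line tuned to twice the period also lines up with the signal, the sketch takes the shortest strongly activated period.

```python
import numpy as np

def comb(x, period, taps=8):
    """Sum of `taps` copies of x delayed by 0, period, 2*period, ... samples."""
    start = (taps - 1) * period          # first sample where every tap has data
    n = len(x) - start
    return sum(x[start - k * period: start - k * period + n] for k in range(taps))

def detect_pitch(x, fs, periods, taps=8, tol=0.99):
    """Frequency of the shortest period whose comb output energy is within
    `tol` of the strongest comb's output energy."""
    energy = np.array([np.mean(comb(x, p, taps) ** 2) for p in periods])
    strong = [p for p, e in zip(periods, energy) if e >= tol * energy.max()]
    return fs / min(strong)

fs = 16000
t = np.arange(fs) / fs
x = np.cos(2 * np.pi * 200.0 * t)                     # one "source" at 200 Hz
pitch = detect_pitch(x, fs, range(40, 161))          # candidates 100-400 Hz
mix = x + np.cos(2 * np.pi * 250.0 * t)              # add a second source
out_200 = comb(mix, 80)                               # comb tuned to 200 Hz
```

For the mixture, the comb tuned to 80 samples (200Hz at a 16kHz sample rate) passes the 200Hz component with a gain of 8, and with 8 taps the 250Hz component happens to cancel exactly; in general, other pitches are attenuated rather than removed.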

The neural network is capable of detecting the fundamental frequency of each source to high accuracy (1%) and then using that pitch to separate signals from each source into independent neural streams. These streams can then be separately analyzed for pitch, timbre, azimuth, and distance. One of the significant features of this model is the insight it brings to the problem of room acoustics. All the relevant information extracted by this mechanism is dependent on the phase relationships between upper harmonics. These phase relationships are scrambled in predictable ways by reflections and noise. In room acoustics most of the scrambling is done by reflections and reverberation. These come later than the direct sound (which contains the unscrambled harmonics). If the time delay is sufficient the brain can detect the properties of the direct sound before it is overwhelmed by early reflections and reverberation.
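How much time the direct sound gets before a reflection arrives depends on geometry. A minimal sketch using the standard image-source construction for a single side wall; the positions, the wall location, and the 343 m/s speed of sound are assumed example values.

```python
import math

C = 343.0  # speed of sound in m/s (assumed)

def side_wall_delay(source, listener, wall_x):
    """Delay (s) of a single side-wall reflection behind the direct sound.

    Image-source method: mirror the source in the wall at x = wall_x.
    source and listener are (x, y) positions in metres.
    """
    sx, sy = source
    image = (2 * wall_x - sx, sy)              # mirrored source position
    direct = math.dist(source, listener)
    reflected = math.dist(image, listener)
    return (reflected - direct) / C
```

For a source and listener 20 m apart on a line 5 m from the wall, `side_wall_delay((0, 0), (0, 20), 5.0)` gives about 6.9 ms, roughly 1.4 periods of a 200Hz fundamental.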

By studying this scrambling process through the properties of my neural model we can predict and measure the degree to which pitch, azimuth, timbre, and distance of individual sources are preserved (or lost) in a particular seat in a particular hall. The model has been used to accurately predict the distance from the sound source at which localization and engagement are lost in individual halls, using only binaural recordings of live music. This distance is often discouragingly close to the sound sources, leaving the people in most of the seats to cope with much older and less accurate mechanisms for appreciating the complexities of the music.

The network, along with the many experiences the author has had with the distance perception of "near" and "far" and its relationship to audience engagement, is contained in the three preprints written for the International Conference on Acoustics (ICA2010) in Sydney. The effects were demonstrated (to an unfortunately small audience) at the ISRA conference in Melbourne, which concluded on August 31, 2010. The three preprints are available on the following links. "Phase Coherence as a measure of Acoustic Quality, part one: The Neural Network"

"Phase Coherence as a measure of Acoustic Quality, part two: Perceiving Engagement"

"Phase Coherence as a measure of Acoustic Quality, part three: Hall Design"

I believe this model is of high importance for both the study of hearing and speech, and for the practical problem of designing better concert halls and opera houses. The model - assuming it is correct - shows that the human auditory mechanism has evolved over millions of years for the purpose of extracting the maximum amount of information from a sound field in the presence of non-vital interference of many kinds. The information most needed is the identification of the pitch, timbre, localization, and distance of a possibly life threatening source of sound. It makes sense that most of this information is encoded in the sound waves that reach the ear in the harmonics of complex tones - not in the fundamentals. Most background noise is inversely proportional to frequency in its spectrum, and thus is much stronger at low frequencies than at high frequencies. But in addition, the harmonics - being at higher frequencies - contain more information about the pitch of the fundamentals than the fundamentals themselves, and are also easier to localize, since the interaural level differences at high frequencies are much higher than at low frequencies.

The proposed mechanism is capable of separating the pitch information from multiple sources into independent neural streams, allowing a person's consciousness to choose among them at will. This is the well known "cocktail party effect" where we can choose to listen to one out of four or more simultaneous conversations. The proposed mechanism solves this fundamental problem, as well as providing the pitch acuity of a trained musician. The way it detects pitch also explains many of the unexplained properties of our perception of music and harmony.

September 2 found me in Berlin working on a LARES system in the new home of the Deutsche Staatsoper Berlin in the Schillertheater. This was a real learning experience! The Staatsoper wanted the 20+ year old LARES equipment to be re-installed in the new location, and with the help of Muller-BBM we succeeded. Barenboim was satisfied. I was aching to install our newest version of the LARES process, but final hardware is not ready. I was able to patch in a prototype late at night, and it performed very well indeed. I could raise the reverberation time of the hall well beyond two seconds at 1000Hz with no hint of coloration. The new hardware should be available soon, and I can't wait.

On September 8th I had the honor of giving a two-hour lecture at Muller-BBM GmbH in Munich. The slides for this lecture contain updated Matlab code for the LOC measure for localization. The code will open a Microsoft .wav file that contains a binaural impulse response. The file is assumed to be two-channel. The program then calculates LOC assuming that the sound source is on the left side. The code also plots a graphic of the number of nerve impulses from the direct sound and the number of nerve impulses from the reverberation. The slides are available here: "Listening to Acoustics"

The slides presented in Sydney and Melbourne are mostly the same as the ones in the following link, which were presented to audiences in Boston, and Washington DC. These slides are more extensive than the ones for Sydney, as more time was available for the presentation. A relatively complete explanation of the author's equation for the degree of localization and engagement, along with Matlab code for calculating the parameter are included in the slides. "The Relationship Between Audience Engagement and Our Ability to Perceive the Pitch, Timbre, Azimuth and Envelopment of Multiple Sources" contains the slides from the talks given in Boston and Washington DC on the subject.

The purpose of the research into hearing described in these slides is not necessarily to discover the exact neurology of hearing, but to understand why and how so many of our most important sound perceptions are dependent on acoustics - particularly on the strength and time delay of early reflections. Early reflections are currently presumed to be beneficial in most cases, but my experience has shown that when there are excessive early reflections they strongly detract from both the psychological clarity and engagement of a sound. The slides are pretty hard-hitting. They can be read together with the slides from the previous talk below - from which they partially borrow.

Thanks to Professor Omoto and his students at Kyushu University for pointing out that the equation for LOC in "The importance of the direct to reverberant ratio in the perception of distance, localization, clarity, and envelopment" was nonsense. I had inverted the order of the integrals and the log function. Many thanks to Omoto-San. I corrected the slide, and added some explanations. I also added MATLAB code for calculating it, which might help in trying to understand it.
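Why the order of the log and the integrals matters: taking the log of an average is not the same as averaging logs. A two-number illustration (the values are arbitrary and this is not the LOC formula):

```python
import numpy as np

energies = np.array([1.0, 100.0])                 # two arbitrary energy values
log_of_mean = 10 * np.log10(energies.mean())      # integrate first, then take the log
mean_of_log = np.mean(10 * np.log10(energies))    # take the log first, then integrate
```

The log of the mean gives about 17 dB and the mean of the logs exactly 10 dB; the log of a mean can never be smaller than the mean of the logs.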

I have been recently writing some reviews and a brief note on reverberation for the Boston Musical Intelligencer - www.classical-scene.com. You might want to check out the site. As part of the effort to understand how the ear hears music I am planning several presentations at the ICA conference in Sydney, and a listening demonstration seminar in Melbourne this summer.

A fairly recent addition to this site is a brief note on the relationship between the perception of "running liveness" and the independent perception of direct sound. My most recent experiments (with several subjects) show that envelopment - and the sense of the hall - is greatly enhanced when it is possible to precisely localize instruments. This occurs even when the direct sound is 14 dB or more lower in energy than the reflected or reverberant energy. The note was written as a response to a blog entry by Richard Talaske. The link to Talaske's blog is included in the note. "Direct sound, Engagement, and Running Reverberation"

My latest research has focused on the question of audience engagement with music and drama. I was taught to appreciate this psychological phenomenon by several people, including among others Peter Lockwood (assistant conductor at the Amsterdam Opera), Michael Schønwandt in Copenhagen, and the five major drama directors in Copenhagen who participated in an experiment with a live performance several years ago. The idea was given high priority after a talk by Asbjørn Krokstad at the IoA conference in Oslo last September. Krokstad gave me the word engagement to describe the phenomenon, and spoke of its enormous importance (and current neglect) in the study and design of concert halls and opera houses.

His insight motivated the talk I gave in Brighton the following month, also courtesy of the IoA. But the work was far from done. If one thinks one has found a vitally important perceptual phenomenon that most people are unaware of, it is essential to come up with a mathematical measure for it. I was not sure this was possible, but went to work anyway. Shortly before I was scheduled to give another talk on this subject in Munich for the Audio Engineering Society, I came up with a possible solution: a simple mathematical equation that predicts the threshold for detecting the localization (azimuth) of a sound source in a reverberant field. You plug in an impulse response, and it gives you an answer in dB. If the value is above zero, I can localize the sound. If it is below zero, I cannot. Whether this equation works for other people I do not know, but Ville Pulkki and his students may be interested in finding out. Although azimuth detection is NOT the same as engagement, I believe it is a vital first step. For me, engagement seems to correspond to the azimuth detection equation returning a value above +3 dB.
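The equation itself and its Matlab code are in the slides. As a much simpler illustration of the "plug in an impulse response, get a number in dB" workflow, here is a sketch of a plain windowed direct-to-reverberant energy ratio. This is NOT the localization equation and is not claimed to predict localization. The 5 ms direct-sound window, the onset rule (first sample within 20 dB of the peak), and the name `windowed_dr_db` are assumptions made for the sketch.

```python
import numpy as np

def windowed_dr_db(ir, fs, direct_window_s=0.005, onset_frac=0.1):
    """Direct-to-reverberant energy ratio in dB from one impulse response.

    Hypothetical helper, not the localization equation: energy in the first
    `direct_window_s` seconds after the onset counts as direct sound, and
    everything later counts as reflected energy.
    """
    ir = np.asarray(ir, dtype=float)
    mag = np.abs(ir)
    # Onset = first sample within 20 dB (amplitude factor 0.1) of the peak,
    # so a reflection louder than the direct sound is not taken as the onset.
    onset = int(np.flatnonzero(mag >= onset_frac * mag.max())[0])
    split = onset + int(round(direct_window_s * fs))
    direct = np.sum(ir[onset:split] ** 2)
    reflected = np.sum(ir[split:] ** 2)
    # Returns +inf (with a NumPy warning) if nothing arrives after the window.
    return 10.0 * np.log10(direct / reflected)

# Toy impulse response at 48 kHz: direct sound plus one later reflection.
fs = 48000
ir = np.zeros(2000)
ir[0] = 1.0
ir[500] = 0.5
print(round(windowed_dr_db(ir, fs), 2))  # 6.02
```

A real hall response has thousands of reflections rather than one, and the ratio is usually computed per frequency band; the single-spike example only checks the arithmetic.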

The talk - there is no paper yet - that describes this work (and a great deal besides) is here: "The importance of the direct to reverberant ratio in the perception of distance, localization, clarity, and envelopment" The talk here is the one I presented at the Portland meeting of the ASA, and is slightly different from the one in Munich. It contains audible examples that should work when clicked. The Munich talk is here: "The importance of the direct to reverberant ratio... Munich" I found some errors in the original presentation - this version is correct as of 5/14/09.

There is a preprint available from the AES that contains most of the talk - but does not include the new equation. "The importance of the direct to reverberant ratio in the perception of distance, localization, clarity, and envelopment"

In January I traveled to Troy, NY to talk with Ning Xiang and his students about my current research. Part of the result was a renewed interest in publishing my work on the localization mechanisms used in binaural hearing and, because of these mechanisms, on the importance of doing measurements at the eardrum and not at the opening of the ear canal - or, even worse, with a blocked ear canal. This talk was recently revised for presentation at the Audio Engineering Society Convention in Munich. The link here is to the version as of May 9, 2009. "Frequency response adaptation in binaural hearing"

As part of this effort I went back and scanned my 1990 paper on sound reproduction with binaural technology. I found the paper to be long, wordy, and far too full of ideas to be very useful. But for the most part, I think it is very interesting and correct. Where I now disagree with it I have added some comments in red. I think it makes interesting reading if you can stick with it. "Binaural Techniques for Music Reproduction" This paper says a great many correct things about binaural hearing, and agrees closely with my current work, coming to identical conclusions - namely that blocked ear canal measurements and partially blocked ear canal measurements do not properly capture the response of headphones. You have to measure at the eardrum - or, as I have recently developed, use loudness comparisons to find the response of headphones at the eardrum. The use of a frontal sound source as a reference is also recommended, both for headphone equalization and for dummy head equalization. I will put a copy of the AES journal paper on dummy head equalization on this site in the near future.

In November I traveled to the Tonmeister Tagung in Leipzig, followed by the IOA conference on sound reproduction in Brighton. In Leipzig I gave two lectures, one on the importance of direct sound in concert halls, and another on headphones and binaural hearing. The talk on concert halls was repeated in Brighton in a longer form as the Peter Barnett memorial lecture. I was able to demonstrate many of the perceptions with 5.1 recordings in Brighton, thanks to Mark Bailey. "The importance of the direct to reverberant ratio in the perception of distance, localization, clarity, and envelopment"

The concert hall paper had an important message: current research on concert halls has tested the case where the direct sound is stronger than the sum of all the reflected energy. This domain is appropriate to recordings, but it is inappropriate to halls, where the energy in the direct sound is far less than the energy in the reverberation in most of the seats. When laboratory tests are conducted using realistic levels of direct sound, very different results emerge.
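A textbook diffuse-field (Sabine) estimate shows why the direct sound is so weak in most seats. For an omnidirectional source, direct and reverberant energy are equal at the critical distance r_c ≈ 0.057·√(V/T), with V the hall volume in m³ and T the reverberation time in seconds; beyond r_c the direct-to-reverberant ratio falls by about 6 dB per doubling of distance. The sketch below assumes an omnidirectional source and an ideally diffuse field (real instruments are directional), and the hall figures in the example are hypothetical.

```python
import math

def critical_distance_m(volume_m3, rt60_s):
    """Sabine diffuse-field estimate of the critical distance (omni source)."""
    return 0.057 * math.sqrt(volume_m3 / rt60_s)

def dr_at_distance_db(distance_m, volume_m3, rt60_s):
    """Direct-to-reverberant energy ratio in dB at a given source distance."""
    return 20.0 * math.log10(critical_distance_m(volume_m3, rt60_s) / distance_m)

# Hypothetical large hall: 18,000 m^3 with a 2.0 s reverberation time.
V, T = 18000.0, 2.0
print(round(critical_distance_m(V, T), 1))  # 5.4
for r in (10.0, 20.0, 30.0):
    print(r, round(dr_at_distance_db(r, V, T), 1))
```

In this idealized model every seat farther than about 5.4 m has more reverberant than direct energy, and a seat 20 m away sits more than 10 dB below the reverberation.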

We find that in this case, when the brain can separately detect the direct sound in the presence of the reverberation, the music or the drama is enhanced, and audience involvement is maximized. But there must be a time gap between when the direct sound arrives at a listener and the onset of the reverberation if this detection is to take place. In a large hall this gap generally exists, and clarity and involvement can occur over a range of seats. In a small hall the gap is reduced, and the average seating distance must be closer to the sound source if clarity is to be maintained.
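How hall size shrinks that gap can be estimated with a first-order image-source sketch: each flat surface is replaced by a mirror-image source, and the gap is the extra path length of the earliest reflection divided by the speed of sound. The sketch assumes a rectangular room with the source on the centre line and considers only the side walls and a flat ceiling; the function name, source and listener heights, and hall dimensions are hypothetical. Real halls add balconies, stage enclosures, and audience scattering.

```python
import math

def first_reflection_gap_ms(distance, width, ceiling, offset=0.0,
                            source_h=1.5, listener_h=1.2, c=343.0):
    """Delay (ms) of the earliest side-wall or ceiling reflection after the
    direct sound, from first-order image sources (hypothetical geometry).

    distance: source-listener distance along the hall (m)
    width, ceiling: room width and ceiling height (m)
    offset: listener's lateral offset from the centre line (m)
    """
    dz = source_h - listener_h
    direct = math.sqrt(distance**2 + offset**2 + dz**2)
    # Nearer side wall: the image of a centre-line source lies at +/- width,
    # so its lateral separation from the listener is width - |offset|.
    side = math.sqrt(distance**2 + (width - abs(offset))**2 + dz**2)
    # Ceiling: the image source sits at height 2 * ceiling - source_h.
    top = math.sqrt(distance**2 + offset**2
                    + (2.0 * ceiling - source_h - listener_h)**2)
    return 1000.0 * (min(side, top) - direct) / c

# The same seat position in a hall and in a half-scale copy of it.
big = first_reflection_gap_ms(distance=20.0, width=25.0, ceiling=18.0)
small = first_reflection_gap_ms(distance=10.0, width=12.5, ceiling=9.0)
print(round(big, 1), round(small, 1))  # 35.0 17.5
```

Halving every dimension roughly halves the gap, which is one way to see why a smaller hall needs its audience closer to the source.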

Conclusion: Don't build shoebox halls for sizes under 1000 seats!

This paper presentation included some wonderful 5.1 demos of the sound of halls and small halls with different D/R ratios and time gaps. I will include these here - but not yet. Stay tuned.

The paper on headphones goes into detail about the mechanisms the brain uses for detecting timbre and localization. It includes some interesting data on the unreliability of blocked ear canal measurements of HRTFs. Highly recommended to anyone who wants to know why head-tracking is assumed to be necessary for binaural reproduction over headphones. An obvious conclusion: if head-tracking is necessary, you KNOW the timbre is seriously in error. "Frequency response adaptation in binaural hearing"

A Finnish student requested that I add the lecture slides for a talk I gave in October 2008 to the Finnish section of the Audio Engineering Society. The subject was "Recording the Verdi Requiem in Surround and High Definition Video." These slides can be found with this link. However, I notice that I had previously put most of these slides on this site, together with some of the audio examples you can click to hear. This version can be found below in the link to the talks given to the Tonmeister conference in 2006.

Last September I gave a paper at the ICA2007 in Madrid, which presented data from a series of experiments that I had hoped would lend some clarity to the question of why some concert halls sound quite different from others, in spite of having very similar measured values of RT, EDT, C80 and the like. A related question was how can we detect the azimuth of different sound sources when the direct sound - which must carry the azimuth information - is such a small percentage of the total sound energy in most seats?

The experiments first calculated the direct/reverberant ratio for different seats in a model hall (with sanity checks from real halls) and then looked for the thresholds for azimuth detection as a function of d/r. Some very interesting results emerged. It seems that the delay time between the arrival of the direct sound and the time that the energy in the early reflections had built up to a level sufficient to mask the direct sound was absolutely critical to our ability to obtain both azimuth and distance information. In retrospect this point is obvious. Of course the brain needs time to separately perceive the direct sound and the information it contains - else this information cannot be perceived.
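The build-up time described above can be read off an impulse response. The sketch below treats the first 5 ms after the onset as direct sound and reports how long after the onset the cumulative reflected energy reaches the direct energy. The 5 ms window, the 0 dB threshold standing in for "sufficient to mask the direct sound", and the function name are assumptions for illustration, not the criterion used in the experiments.

```python
import numpy as np

def buildup_time_ms(ir, fs, direct_window_s=0.005, threshold_db=0.0,
                    onset_frac=0.1):
    """Time (ms) after the onset at which cumulative reflected energy reaches
    the direct energy plus `threshold_db`; None if it never does.
    Hypothetical helper with an assumed masking criterion.
    """
    ir = np.asarray(ir, dtype=float)
    mag = np.abs(ir)
    # Onset = first sample within 20 dB of the peak.
    onset = int(np.flatnonzero(mag >= onset_frac * mag.max())[0])
    split = onset + int(round(direct_window_s * fs))
    target = np.sum(ir[onset:split] ** 2) * 10.0 ** (threshold_db / 10.0)
    cumulative = np.cumsum(ir[split:] ** 2)   # reflected energy so far
    reached = np.flatnonzero(cumulative >= target)
    if reached.size == 0:
        return None
    return float(1000.0 * (split + reached[0] - onset) / fs)

# Toy response at 1 kHz: direct sound plus three equal reflections.
fs = 1000
ir = np.zeros(200)
ir[0] = 1.0
ir[[10, 30, 50]] = 0.6
print(buildup_time_ms(ir, fs))  # 50.0
```

Here the first two reflections together carry only 0.72 of the direct energy, so the threshold is crossed by the third reflection, 50 ms after the direct sound.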

The necessity of this time delay has consequences. It means that as the size of a hall decreases, the direct to reverberant ratio over all the seats must increase if sounds are to be correctly localized and the clarity that comes with this localization is to be maintained. To achieve this goal, it is desirable in small halls that the audience sits on average closer to the musicians, and that the volume needed to provide a longer reverberation time be obtained through a high ceiling, and not through a long, rectangular hall. Halls with fewer than 1500 seats should probably look more like opera houses with unusually high ceilings. They should not be shoebox in shape. Fortunately, Boston has such a hall: Jordan Hall at New England Conservatory (1200 seats) is the best hall I know of worldwide for chamber music or small orchestral concerts. It has a near opera house shape with a single high balcony, and a very high ceiling. The sound is clear and precise in nearly every seat, with a wonderful sense of surrounding reverberation. Musicians love it, as does the local audience.

Alas, the paper went over like a lead balloon. It was way too dry, and it seemed no one understood how it could possibly be relevant to hall design. So I quickly re-did the paper I was to present in Seville the next week to answer the many questions I received after the one in Madrid. The powerpoints for this presentation are here: "Why do concert halls sound different – and how can we design them to sound better?" Hopefully this gets the ideas across better.

At the request of a reader I have posted the sound files associated with the lecture at the RADIS conference, which followed ICA2004. "The sound files for RADIS2004" These include several (pinna-less) binaural recordings from opera houses, and both music and speech convolved with impulse responses published by Beranek. The lecture slides can be found with the following link: Slides for RADIS2004

David Griesinger is a physicist who works in the field of sound and music. Starting in his undergraduate years at Harvard he worked as a recording engineer, through which he learned of the tremendous importance of room acoustics in recording technique. After finishing his PhD in Physics (the Mössbauer Effect in Zinc 67) he developed one of the first digital reverberation devices. The product eventually became the Lexicon 224 reverberator. Since then David has been the principal scientist at Lexicon, and is chiefly responsible for the algorithm design that goes into their reverberation and surround sound products. He has also conducted research into the perception and measurement of the acoustical properties of concert halls and opera houses, and is the designer of the LARES reverberation enhancement system.

The purpose of this site is to share some of my publications and lectures. Most of the material on the site was written under great time pressure. The papers were intended as preprints for an oral presentation. Some of them are available as published preprints from the Audio Engineering Society. The papers should be considered drafts - they have not been peer reviewed.

Several PowerPoint presentations have also been added to the site. These presentations are often quite readable and informative. In general they are more coherent than the preprint of the same material, although they are naturally not as detailed. They can be read in conjunction with the preprint of a talk. They are available as .pdf downloads, and your browser may also let you open them directly with Adobe Acrobat.

Some recent work includes: